Detect Objects from Multispectral Satellite Images using U-Net

Multispectral sensors generally capture image information across several wide bands of the electromagnetic spectrum (typically 3 to 10). Surfaces of different objects reflect and absorb light and other radiation differently, and by different amounts at different wavelengths. The ratio of reflected light to incident light is known as reflectance and is expressed as a percentage. Multispectral data therefore provides additional spectral channels with which to detect and classify objects in a scene based on their reflectance properties across several bands.
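To make the reflectance definition concrete, here is a minimal sketch in plain Python. It assumes reflected and incident light have already been measured per band. The band names (`red`, `nir`), the sample values, and the helper names are hypothetical. The normalized difference of near-infrared and red reflectance is the well-known NDVI vegetation index. It appears here only as one example of separating surfaces by comparing bands and is not necessarily part of this project's pipeline.

```python
def reflectance_percent(reflected: float, incident: float) -> float:
    """Reflectance: ratio of reflected to incident light, as a percentage."""
    if incident <= 0:
        raise ValueError("incident light must be positive")
    return 100.0 * reflected / incident


def spectral_signature(reflected: dict[str, float],
                       incident: dict[str, float]) -> dict[str, float]:
    """Reflectance of one pixel in every band (its spectral signature)."""
    return {band: reflectance_percent(reflected[band], incident[band])
            for band in reflected}


def normalized_difference(a: float, b: float) -> float:
    """(a - b) / (a + b); with a = NIR and b = red this is NDVI."""
    total = a + b
    return 0.0 if total == 0 else (a - b) / total


# Hypothetical measurements for two pixels under the same illumination.
incident = {"red": 100.0, "nir": 100.0}
vegetation = spectral_signature({"red": 8.0, "nir": 50.0}, incident)
water = spectral_signature({"red": 6.0, "nir": 2.0}, incident)

print(round(normalized_difference(vegetation["nir"], vegetation["red"]), 2))  # 0.72
print(normalized_difference(water["nir"], water["red"]))  # -0.5
```

Healthy vegetation reflects strongly in the near infrared and weakly in red, while water absorbs most near-infrared light. The two pixels therefore land on opposite sides of zero even though their red reflectance is similar.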

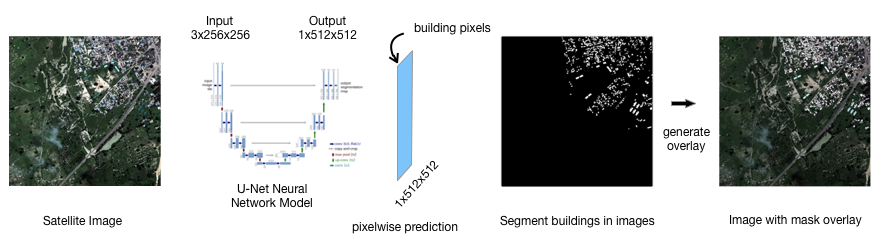

The convolutional neural network used was based on the U-Net architecture developed by Ronneberger et al. [3]. It is characterized by a U-shaped sequence of conventional CNN contracting (downsampling) layers followed by an equal number of expanding (upsampling) layers, with skip connections that pass the feature maps from each contracting level to the matching expanding level.
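The U shape can be illustrated with a small shape tracer. This is a sketch that assumes the layout from Ronneberger et al.: two unpadded 3x3 convolutions per level, 2x2 max pooling, 2x2 up-convolutions that halve the channel count, and center-cropped skip connections. It computes feature-map sizes only, with no weights. The function names and the single-channel 1x1 output layer (for a binary mask) are illustrative assumptions, not this project's code.

```python
def double_conv(size: int) -> int:
    """Each unpadded 3x3 convolution trims one pixel from every border,
    so two of them remove 4 pixels from the side length."""
    return size - 4


def trace_unet(input_size: int = 572, base_channels: int = 64,
               depth: int = 4, n_classes: int = 1) -> list[tuple[str, int, int]]:
    """Return (stage, side length, channels) for each stage of a U-Net."""
    stages: list[tuple[str, int, int]] = []
    skips: list[int] = []  # encoder map sizes reused by the skip connections
    size, channels = input_size, base_channels

    # Contracting path: double conv, remember the map, 2x2 max pool halves it.
    for level in range(depth):
        size = double_conv(size)
        stages.append((f"down{level + 1}", size, channels))
        skips.append(size)
        if size % 2:
            raise ValueError(f"odd size {size} cannot be pooled at level {level + 1}")
        size, channels = size // 2, channels * 2

    # Bottleneck at the bottom of the "U".
    size = double_conv(size)
    stages.append(("bottom", size, channels))

    # Expanding path: 2x2 up-conv doubles the size and halves the channels; the
    # matching encoder map is center-cropped to this size and concatenated,
    # so the following double conv sees 2 * channels input channels.
    for level in reversed(range(depth)):
        size, channels = size * 2, channels // 2
        crop = skips[level] - size
        if crop < 0 or crop % 2:
            raise ValueError(f"cannot center-crop {skips[level]} to {size}")
        size = double_conv(size)
        stages.append((f"up{level + 1}", size, channels))

    # A 1x1 convolution maps the features to one score per class per pixel.
    stages.append(("out_1x1", size, n_classes))
    return stages


for name, side, ch in trace_unet():
    print(f"{name:>7}: {side} x {side} x {ch}")
```

With the defaults it reproduces the 572 x 572 input and 388 x 388 output of the original paper. The output is smaller than the input because unpadded convolutions discard border pixels. Many implementations use padded ("same") convolutions instead, which keep input and output sizes equal.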

U-Net has been shown to be effective for biomedical semantic segmentation with small training datasets. It also proved equally effective at binary (object vs. background) pixel-wise classification of objects in satellite images, again using a small dataset.
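For a binary task, the network's final layer typically produces one probability per pixel, which is thresholded into an object mask. The sketch below assumes a sigmoid output, a 0.5 threshold, and the Jaccard index (IoU) and Dice coefficient as evaluation metrics. None of these choices is stated in the text above, and the sample arrays are made up.

```python
def to_mask(probs: list[list[float]], threshold: float = 0.5) -> list[list[int]]:
    """Label a pixel as object (1) if its probability reaches the threshold."""
    return [[1 if p >= threshold else 0 for p in row] for row in probs]


def jaccard_and_dice(pred: list[list[int]],
                     truth: list[list[int]]) -> tuple[float, float]:
    """Overlap scores between two binary masks of the same shape."""
    if len(pred) != len(truth) or any(len(a) != len(b) for a, b in zip(pred, truth)):
        raise ValueError("masks must have the same shape")
    inter = sum(p * t for pr, tr in zip(pred, truth) for p, t in zip(pr, tr))
    pred_sum = sum(map(sum, pred))
    truth_sum = sum(map(sum, truth))
    if pred_sum + truth_sum == 0:
        return 1.0, 1.0  # both masks empty: treat as perfect agreement
    union = pred_sum + truth_sum - inter
    return inter / union, 2 * inter / (pred_sum + truth_sum)


# Hypothetical 3x3 network output and ground truth.
probs = [[0.9, 0.8, 0.1],
         [0.7, 0.2, 0.05],
         [0.1, 0.6, 0.3]]
truth = [[1, 1, 0],
         [1, 1, 0],
         [0, 0, 0]]

mask = to_mask(probs)
iou, dice = jaccard_and_dice(mask, truth)
print(mask)       # [[1, 1, 0], [1, 0, 0], [0, 1, 0]]
print(iou, dice)  # 0.6 0.75
```

Both scores reward overlap between the predicted and true object pixels while ignoring the (usually much larger) background. This makes them more informative than plain pixel accuracy when objects cover only a small part of a scene.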